Data collection

A solution that meets your challenges

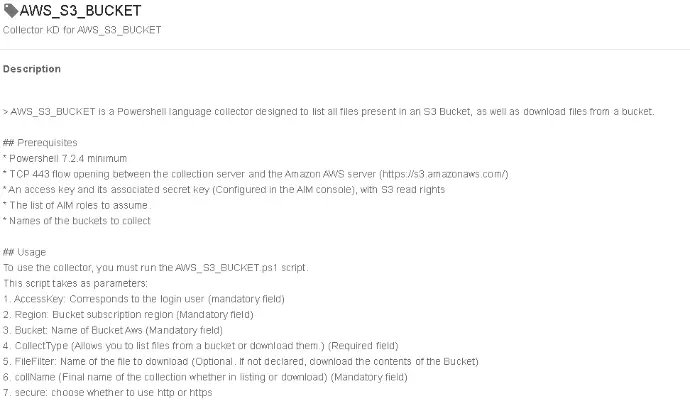

Our platform consolidates all your data sources, whether they are technical (Active Directory, SCCM, antivirus, hypervisors, Azure cloud, AWS…), management-related (SAP, invoices, fixed assets), or derived from external files (Excel, PDF). With a scheduled local application and over 100 ready-to-use collectors, your data is centralised and made reliable.

You get a complete discovery and overview of your configuration items (CIs), along with the essential attributes needed to manage your fleet effectively.

Discovery of your CIs

How many servers, workstations, or applications do you actually have in your information system?

The answer almost always depends on the source you consult. Active Directory, CMDB, antivirus, monitoring tools, SCCM, Airwatch or Satellite… each provides only a partial view, and the discrepancies between them are often significant.

If you have never compared the figures from your different tools on the same day, we invite you to do so: you will likely be surprised by the gaps between auto-discovery, AD, the CMDB, and the technical consoles.

The only way to obtain a clear and reliable view is to consolidate all your data sources. This approach lets you identify every CI present in at least one source, spot those that appear in every source (and are therefore validated) and, conversely, highlight those that are missing from some sources and may need to be decommissioned.
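The consolidation described above can be sketched with plain set operations. This is a minimal illustration, not the platform's implementation: the source names, the sample hostnames, and the `normalise` matching rule (lower-casing and dropping the domain suffix) are all assumptions made for the example.

```python
from collections import defaultdict


def normalise(name: str) -> str:
    """Lower-case a hostname and drop any domain suffix (assumed matching rule)."""
    return name.strip().lower().split(".")[0]


def consolidate(sources: dict[str, set[str]]) -> dict[str, set[str]]:
    """Map each normalised CI name to the set of sources that report it."""
    seen_in: dict[str, set[str]] = defaultdict(set)
    for source, names in sources.items():
        for name in names:
            seen_in[normalise(name)].add(source)
    return dict(seen_in)


# Hypothetical sample data: three sources with partially overlapping views.
sources = {
    "active_directory": {"SRV-01", "SRV-02", "WKS-10"},
    "cmdb": {"srv-01.corp.example", "srv-03"},
    "antivirus": {"srv-01", "srv-02", "wks-10"},
}

seen_in = consolidate(sources)

# Every CI present in at least one source.
all_cis = set(seen_in)
# CIs reported by every source: treated here as validated.
validated = {ci for ci, found in seen_in.items() if found == set(sources)}
# CIs missing from at least one source: candidates for review or decommissioning.
to_review = all_cis - validated

for ci in sorted(to_review):
    missing = sorted(set(sources) - seen_in[ci])
    print(f"{ci}: missing from {', '.join(missing)}")
```

In practice the matching rule is the delicate part: hostnames, serial numbers, and asset tags rarely line up exactly across tools, so a real reconciliation would probably need more than one key.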

The more sources you integrate, the more accurate the inventory becomes. By adding, for example, your asset files or your supplier invoices, you can not only make your inventories more reliable but also check that your costs (maintenance, outsourcing...) are consistent with the reality of your fleet.
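As a rough illustration of that cost check, the sketch below compares invoice lines with a consolidated inventory. The supplier names, asset names, amounts, and the `cost_gaps` helper are hypothetical, and it assumes invoices identify assets by the same name as the inventory.

```python
# Hypothetical invoice lines: (supplier, asset name, annual cost).
invoice_lines = [
    ("MaintCo", "srv-01", 1200.0),
    ("MaintCo", "srv-09", 1200.0),
    ("HostCo", "srv-02", 800.0),
]

# Hypothetical consolidated inventory of CI names.
inventory = {"srv-01", "srv-02", "srv-03"}


def cost_gaps(lines, known_cis):
    """Return invoice lines with no matching CI, and CIs with no invoice line."""
    billed = {asset for _, asset, _ in lines}
    billed_not_found = [line for line in lines if line[1] not in known_cis]
    found_not_billed = known_cis - billed
    return billed_not_found, found_not_billed


billed_not_found, found_not_billed = cost_gaps(invoice_lines, inventory)

# Total cost attached to assets that no source can find.
unexplained_cost = sum(cost for _, _, cost in billed_not_found)

print("Billed but not in inventory:", billed_not_found)
print("In inventory but not billed:", sorted(found_not_billed))
print("Cost to investigate:", unexplained_cost)
```

Assets that are billed but not found point to possible overpayment, while assets that are found but not billed may be running without a maintenance contract.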

With this approach, you turn fragmented and sometimes contradictory data into a single, consolidated, and actionable view, which becomes the foundation for effective management and sustainable cost reduction.